IBM Granite 4.0: A Hybrid LLM for Healthcare AI

[Revised February 20, 2026]

IBM Granite 4.0: A New Open-Source LLM for Enterprise and Healthcare

IBM unveiled Granite 4.0 in October 2025 as the next generation of its open-source language models, aimed at enterprise AI. Granite 4.0 introduces a novel hybrid Mamba-2/Transformer architecture that dramatically reduces GPU memory needs (over 70% less) while maintaining high performance ([1]). According to IBM, these models "can be run on significantly cheaper GPUs and at significantly reduced costs compared to conventional LLMs" ([2]). The rollout includes multiple model sizes: Granite-H-Small (32B total parameters, 9B active) for heavy-duty tasks, Granite-H-Tiny (7B/1B) for low-latency needs, 3B variants (dense and hybrid) for edge use, and the ultra-compact Granite 4.0 Nano series (350M and 1B parameters) released in late October 2025 for on-device and browser-based inference ([3]). IBM has also announced forthcoming Thinking variants optimized for complex reasoning tasks, and a Medium-sized model to fill the gap between Small and Tiny ([2]). All Granite 4.0 models are released under the permissive Apache 2.0 license, and – notably – are the first open LLMs certified under ISO 42001, with cryptographic signing to guarantee integrity and governance ([4]). These features signal IBM's focus on security and transparency, important for sensitive domains.

Key Features of Granite 4.0

- Hybrid Mamba/Transformer architecture: Combines Mamba-2 layers with Transformer layers, and some variants add a mixture-of-experts approach that activates only a fraction of parameters per input. IBM reports “>70% lower memory requirements and 2× faster inference” than comparable models ([1]). Such efficiency makes Granite 4.0 well-suited for long-context and multi-session tasks with lower hardware costs.

- Range of model sizes: The family ranges from a 32B-parameter "Small" model (9B active) for intensive tasks like retrieval-augmented generation or question-answering, through a 7B "Tiny" model (1B active) for low-latency use and 3B models (hybrid and dense) for quick tasks, down to the ultra-compact Nano series (350M and ~1B parameters) for on-device and edge inference ([1]). The Nano models are small enough to run in a web browser yet deliver solid tool-use and instruction-following capabilities ([3]).

- Open source and certified: All Granite 4.0 models are open-sourced under Apache 2.0, enabling customization and on-premise deployment. IBM emphasizes that these are the "world's first open models to receive ISO 42001 certification", and they are cryptographically signed to enforce best practices in security and governance ([4]).

- Expanding ecosystem – Vision, Guardian, and Thinking: IBM has complemented Granite 4.0 with related models. Granite Vision 3.2 (released February 2025) is a 2B-parameter vision-language model fine-tuned on 13.7 million enterprise document pages, excelling at extracting data from tables, charts, and scanned documents ([5]). Granite Guardian provides safety guardrails that detect risks like social bias, hallucination, and privacy leaks in LLM outputs – critical for healthcare deployments ([6]). IBM has also announced Granite 4.0 Thinking models, which separate reasoning capabilities from instruction-following for improved complex logic performance ([2]).

- Wide availability: Granite 4.0 is accessible via IBM's watsonx.ai platform and distributed through partners (e.g. Dell, Hugging Face, Kaggle, Docker Hub, Replicate), making it easy for organizations to experiment and deploy these models.

Together, these advances mean Granite 4.0 can deliver enterprise-grade AI performance at reduced cost and risk.
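To make the "total versus active parameters" idea concrete, here is a toy sketch of top-k expert routing, the general mechanism behind mixture-of-experts layers. It is not IBM's implementation: the function names, the eight-expert setup, and the choice of k are all illustrative assumptions. Only the parameter counts in the last lines come from the article.

```python
import math
import random


def softmax(scores):
    """Turn raw router scores into probabilities."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def route_top_k(router_scores, k):
    """Pick the k highest-scoring experts and renormalize their weights.

    Only the selected experts run for this token, which is why a
    mixture-of-experts model uses fewer parameters per input than it stores.
    """
    probs = softmax(router_scores)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    norm = sum(probs[i] for i in top)
    return [(i, probs[i] / norm) for i in top]


def active_fraction(active_params, total_params):
    """Share of the model's parameters used for a single input."""
    return active_params / total_params


if __name__ == "__main__":
    random.seed(0)
    scores = [random.gauss(0, 1) for _ in range(8)]  # 8 hypothetical experts
    print(route_top_k(scores, k=2))
    # Parameter counts as quoted in the article.
    print(f"Small: {active_fraction(9e9, 32e9):.0%} of parameters active")
    print(f"Tiny:  {active_fraction(1e9, 7e9):.0%} of parameters active")
```

By the article's own figures, the Small model uses roughly 28% of its parameters per input and the Tiny model roughly 14%.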

Healthcare Applications

Granite 4.0’s strengths – efficiency, openness, and security – make it well-suited for healthcare AI. Large language models in medicine can assist with tasks like summarizing patient data, aiding diagnosis, and automating administrative work. In fact, recent studies highlight the growing capabilities of open LLMs in clinical settings. For example, a Harvard-led study found that a state-of-the-art open-source model (Llama 3.1) matched GPT-4’s performance on 92 challenging medical cases ([7]). This suggests doctors and hospitals could one day use models like Granite for diagnostic support (always with clinician oversight) without relying on black-box APIs.

In practical terms, Granite 4.0 could power many healthcare use-cases:

- Clinical documentation and coding: Automating the creation of patient notes, discharge reports, and billing codes. Studies show LLMs can “automate numerous tasks in healthcare administration” such as note-taking, drafting patient/diagnostic reports, and data summarization ([8]). For instance, Granite could ingest a patient’s chart and generate concise summaries or highlight key findings, greatly reducing the manual workload on doctors and nurses ([8]) ([9]). It could also suggest medical procedure or diagnosis codes based on a visit, helping reduce billing errors ([8]).

- Clinical decision support: Assisting clinicians by retrieving relevant medical knowledge or proposing possible diagnoses and treatment options. An LLM might scan the latest guidelines and patient history to recommend next steps. The Harvard study implies that open models are already capable of deep clinical reasoning ([7]). Granite 4.0’s efficiency allows it to be deployed on-premise or in edge settings (e.g. clinic servers or dedicated GPUs), where it can process sensitive data locally without a round trip to an external service.

- Patient interaction and triage: Granite-based chatbots could answer patient questions (scheduling, medication instructions) or perform symptom triage, providing consistent information 24/7. Because Granite 4.0 can be run privately, such bots could handle patient health inquiries using up-to-date internal protocols. The model’s small footprint means it could function without cloud calls even in rural clinics or on at-home devices, improving availability.

- Research and knowledge access: Helping clinicians and researchers by summarizing medical literature, extracting key findings from journals or clinical trial reports, and generating draft outlines of research proposals. Granite Vision's document-understanding capabilities could be especially useful here, parsing complex tables and figures from published studies to speed evidence-based practice.

- Real-world deployment example: In April 2025, KPJ Healthcare (Malaysia's largest private healthcare network, with 30+ specialist hospitals) partnered with IBM to deploy a watsonx-powered AI chatbot for 24/7 patient services, including FAQ responses, specialist information, and appointment scheduling ([10]). This illustrates the practical viability of IBM's AI stack in clinical settings, and as Granite 4.0 models become the backbone of watsonx.ai, such deployments stand to benefit from the efficiency and cost improvements of the hybrid architecture.

Importantly, privacy and compliance are paramount in healthcare. One big advantage of Granite being open-source is that hospitals can host it locally with patient data on-site. As Harvard researchers note, an open model “can be downloaded and run on a hospital’s private computers, keeping patient data in-house” ([11]). This avoids sending PHI to external servers (as many proprietary AIs require) – a concern for CIOs and clinicians alike ([11]). Industry experts recommend exactly this approach: “You have three compliant options: self-host an open-source LLM, use HIPAA-eligible cloud platforms, or go with a healthcare-focused AI vendor,” with self-hosting providing “full control and privacy” over data ([12]). Granite’s ISO certification and cryptographic signing ([4]) further reinforce trust, aligning with the stringent governance needed in hospitals.
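As a rough illustration of the self-hosting option, the sketch below builds a summarization request for a model served on the hospital's own machine and refuses to send it anywhere else. Everything specific here is an assumption rather than something the article or IBM specifies: the endpoint URL, the OpenAI-style chat-completions request shape (which several local serving tools offer), the placeholder model name, and the helper names.

```python
import json
import urllib.parse
import urllib.request

# Hypothetical values: adjust to whatever your local server actually exposes.
LOCAL_ENDPOINT = "http://localhost:8000/v1/chat/completions"
MODEL_NAME = "granite-4.0-local"  # placeholder, not an official model ID


def build_summary_request(chart_text, max_tokens=256):
    """Build an OpenAI-style chat payload asking for a chart summary."""
    return {
        "model": MODEL_NAME,
        "messages": [
            {"role": "system",
             "content": "Summarize the chart for a clinician. "
                        "Do not add facts that are not in the chart."},
            {"role": "user", "content": chart_text},
        ],
        "max_tokens": max_tokens,
        "temperature": 0.0,
    }


def is_local(url):
    """True only if the URL points at this machine."""
    host = urllib.parse.urlparse(url).hostname
    return host in {"localhost", "127.0.0.1", "::1"}


def send(payload, url=LOCAL_ENDPOINT, timeout=60):
    """POST the payload, refusing any endpoint that is not local."""
    if not is_local(url):
        raise ValueError(f"refusing to send patient data to {url}")
    request = urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.load(response)
```

The `is_local` check is deliberately strict. A real deployment would more likely allow a list of approved internal hosts, but the principle is the same: the code path that carries PHI cannot reach an external server by accident.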

Example healthcare use-cases with Granite 4.0: IBM’s community has even suggested scenarios like using Granite in IBM Watson Health tools. For instance, clinical decision support systems could plug in Granite to interpret lab results or draft patient letters, and medical research platforms could use Granite to sift through genomic data or literature. A proposed list of use cases (by IBM champions) includes Granite-powered summarizers for patient records, Granite-driven health coaching bots, and even Granite-assisted pharmaceutical data analysis and regulatory compliance checks. (While these ideas are aspirational, they illustrate the variety of tasks LLMs can handle.)

Benefits and Cautions

Because Granite 4.0 models are much lighter to run, they make it feasible for smaller clinics or mobile health units to use advanced AI without massive hardware. The Nano series (350M–1B parameters) can run directly in a browser or on edge devices, while the 3B-parameter variants fit in ~4GB of memory, enabling deployment on devices like a Raspberry Pi ([1]). This could democratize AI-driven care in resource-constrained settings.
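A back-of-the-envelope calculation shows why these sizes matter for small devices. The sketch below estimates the memory needed for model weights alone at a few common numeric precisions. The bytes-per-parameter values are standard, but the function and table names are illustrative, and the estimate deliberately ignores activations, caches, and runtime overhead, so real usage will be higher.

```python
# Bytes needed to store one parameter at each precision.
BYTES_PER_PARAM = {"fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(num_params, precision):
    """Approximate memory for weights only, in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9


if __name__ == "__main__":
    # Parameter counts as quoted in the article.
    models = {"Nano 350M": 350e6, "3B": 3e9, "Tiny 7B": 7e9, "Small 32B": 32e9}
    for name, params in models.items():
        row = ", ".join(
            f"{precision}: {weight_memory_gb(params, precision):.1f} GB"
            for precision in BYTES_PER_PARAM
        )
        print(f"{name:>10} -> {row}")
```

At 8-bit precision a 3B-parameter model needs about 3 GB for weights, which is in line with the ~4GB figure quoted above once some overhead is added. Note that a mixture-of-experts model must still hold all of its weights in memory; the active-parameter count reduces compute per input, not storage.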

IBM's Granite Guardian safety models add another layer of protection for healthcare use. These companion models detect risks such as social bias, hallucination, violence, and privacy leaks in LLM outputs, and can be deployed alongside Granite 4.0 to enforce guardrails in clinical workflows ([6]). The ability to audit an open-source model's training data and monitor its outputs with a dedicated safety model helps address concerns about AI unpredictability – a major barrier to adoption in healthcare.

At the same time, experts caution that any AI in medicine must be used with care. LLMs are prone to "hallucinations" (making up facts) and can reflect biases in their training data. As a review notes, LLMs have "transformative potential in medicine" but require "careful integration into healthcare settings" ([13]). The regulatory landscape is also evolving: as of mid-2025, the FDA has authorized over 1,250 AI-enabled medical devices, and its January 2025 draft guidance on AI-enabled device software functions signals increasing scrutiny of AI in clinical workflows ([14]). In practice, Granite 4.0 should augment – not replace – clinician judgment. Workflows will need verification steps (for example, pairing Granite with Granite Guardian to flag uncertain outputs, or confirming data against records) to ensure safety.
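The verification step described above can be sketched as a small wrapper: generate a draft, run it through a separate risk checker, and escalate to a clinician whenever anything is flagged. The two callables below are stand-ins, not real Granite or Granite Guardian calls, and the flag name and data class are hypothetical. In practice each stub would be replaced by a call to the corresponding model.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class ReviewedAnswer:
    text: str
    flags: List[str]
    escalate: bool  # True means a clinician must review before release


def answer_with_guardrail(
    prompt: str,
    generate: Callable[[str], str],
    check_risks: Callable[[str, str], List[str]],
) -> ReviewedAnswer:
    """Generate a draft, check it, and mark it for review if flagged."""
    draft = generate(prompt)
    flags = check_risks(prompt, draft)
    return ReviewedAnswer(text=draft, flags=flags, escalate=bool(flags))


# Stand-in for the main model (hypothetical behavior).
def fake_generate(prompt: str) -> str:
    return "Take 500 mg twice daily."


# Stand-in for the safety model: flags any draft that mentions a dosage.
def fake_check(prompt: str, draft: str) -> List[str]:
    return ["unverified_dosage"] if "mg" in draft else []


if __name__ == "__main__":
    result = answer_with_guardrail("How much should I take?",
                                   fake_generate, fake_check)
    print(result)
```

Keeping the generator and the checker as separate callables means either can be swapped or audited independently, and the escalation rule stays in one visible place.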

Conclusion

IBM Granite 4.0 represents a significant step in enterprise AI, and its open-source, efficient design makes it an attractive platform for healthcare applications. With its reduced memory needs and cryptographic safeguards ([1]) ([4]), Granite 4.0 can run powerful language AI tools at lower cost and under institutional control. The expanding ecosystem, including Granite Vision for document understanding, Granite Guardian for safety guardrails, and forthcoming Thinking models for complex reasoning, provides a comprehensive toolkit for healthcare organizations. Real-world deployments like KPJ Healthcare's watsonx-powered patient chatbot demonstrate that IBM's AI stack is already being used in healthcare settings ([10]).

However, success will rely on rigorous clinical validation and proper guardrails: as one study emphasizes, open-source LLMs can be invaluable co-pilots for clinicians, but only if deployed with physician oversight ([11]) ([15]). As Granite 4.0 continues to expand across IBM's watsonx platform and partner ecosystems, we can expect healthcare teams to increasingly experiment with Granite-driven AI: for example, fine-tuning it on local medical records to build assistants that run inside HIPAA-compliant infrastructure, pairing it with Granite Guardian for safer clinical decision support, or using Granite Vision to digitize and analyze medical documents. Granite 4.0's combination of efficiency, openness, and certification makes it well suited to the strict requirements of medical AI, from research to bedside care ([11]) ([12]).

Sources: IBM's Granite 4.0 announcement and documentation ([2]) ([1]), Granite Nano release ([3]), Granite Guardian ([6]), Granite Vision ([5]), KPJ Healthcare deployment ([10]), FDA AI-enabled devices ([14]), recent medical AI research ([7]) ([9]) ([8]), and industry guidance on AI in healthcare ([12]) ([13]).

External Sources (15)


DISCLAIMER

The information contained in this document is provided for educational and informational purposes only. We make no representations or warranties of any kind, express or implied, about the completeness, accuracy, reliability, suitability, or availability of the information contained herein. Any reliance you place on such information is strictly at your own risk. In no event will IntuitionLabs.ai or its representatives be liable for any loss or damage including without limitation, indirect or consequential loss or damage, or any loss or damage whatsoever arising from the use of information presented in this document. This document may contain content generated with the assistance of artificial intelligence technologies. AI-generated content may contain errors, omissions, or inaccuracies. Readers are advised to independently verify any critical information before acting upon it. All product names, logos, brands, trademarks, and registered trademarks mentioned in this document are the property of their respective owners. All company, product, and service names used in this document are for identification purposes only. Use of these names, logos, trademarks, and brands does not imply endorsement by the respective trademark holders. IntuitionLabs.ai is an AI software development company specializing in helping life-science companies implement and leverage artificial intelligence solutions. Founded in 2023 by Adrien Laurent and based in San Jose, California. This document does not constitute professional or legal advice. For specific guidance related to your business needs, please consult with appropriate qualified professionals.

Related Articles

Mistral Large 3: An Open-Source MoE LLM Explained

An in-depth guide to Mistral Large 3, the open-source MoE LLM. Learn about its architecture, 675B parameters, 256k context window, benchmark performance, and 2026 ecosystem developments including Forge, Small 4, and Voxtral TTS.

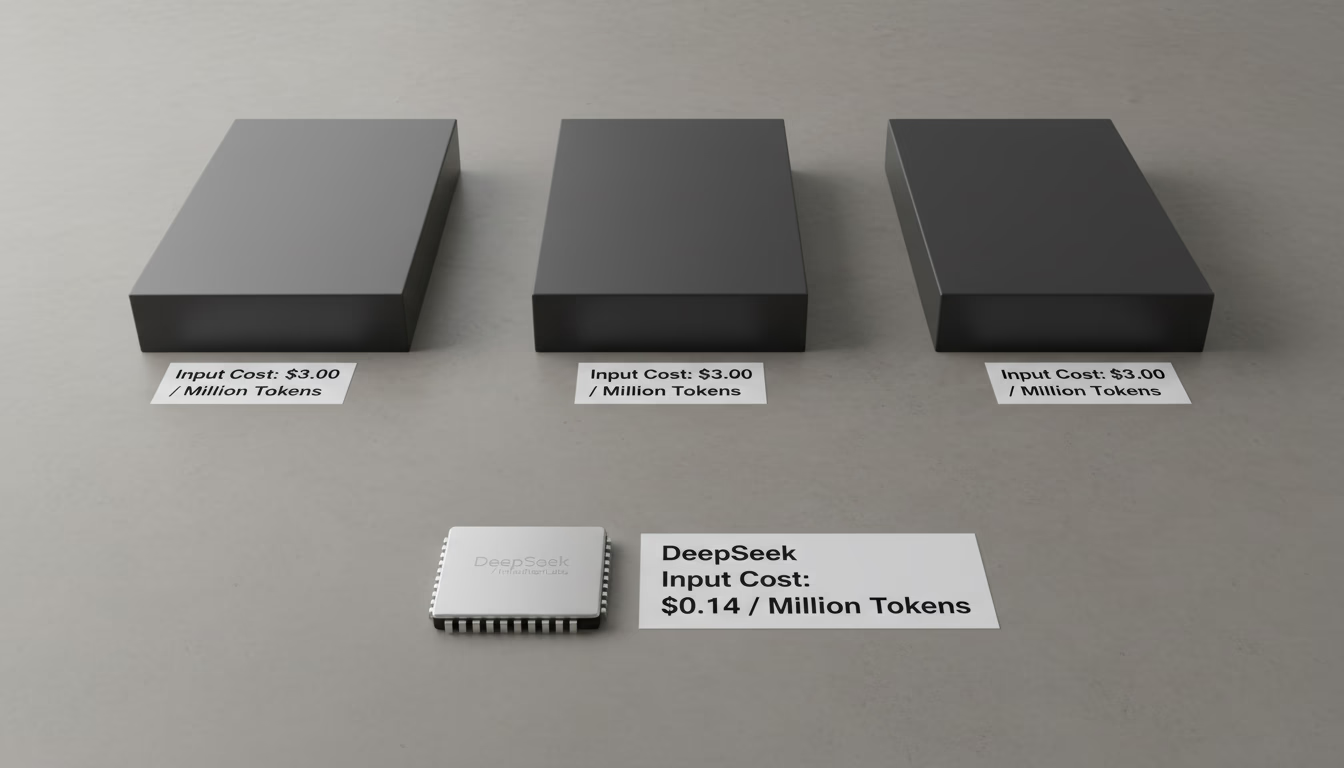

DeepSeek's Low Inference Cost Explained: MoE & Strategy

Learn why DeepSeek's AI inference is up to 50x cheaper than competitors. This analysis covers its Mixture-of-Experts (MoE) architecture and pricing strategy.

GLM-4.6: An Open-Source AI for Coding vs. Sonnet & GPT-5

An analysis of GLM-4.6 and its successor GLM-5, the leading open-source coding models. Compare benchmarks against Claude Opus 4.6, Sonnet 4.6, and GPT-5.3-Codex, plus hardware requirements